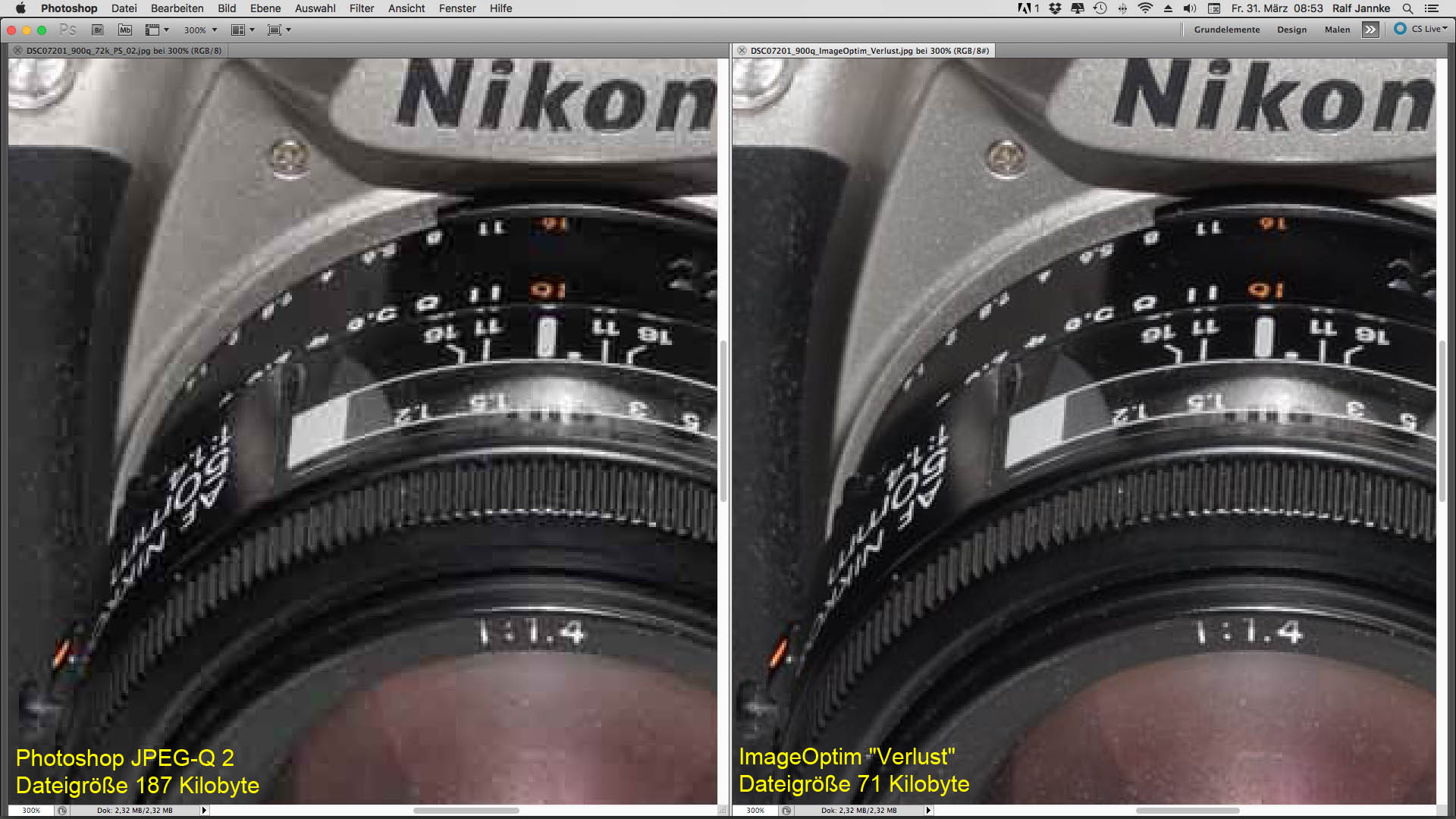

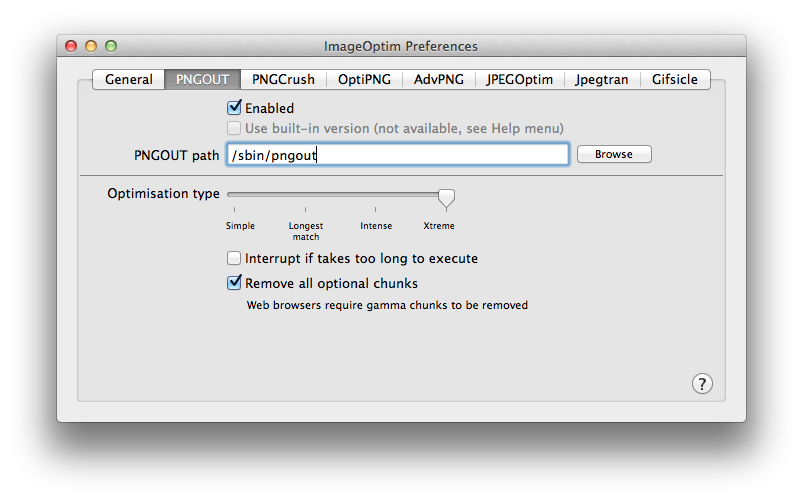

`find . -type f -name "*.jpg" -execdir jpegoptim --all-progressive -v --strip-all --max=85 --preserve '{}' \;`

This command will traverse the current directory and all subdirectories, finding files ending in .jpg and running jpegoptim on each of them. See the post about executing a command on each file from a file glob for how this is done.

Sadly, plain classic JPEG (in contrast to JPEG2000) does not offer selective compression. In JPEG2000, interesting parts of an image can be compressed with a higher quality setting, while less important parts can be heavily compressed. What you can do with JPEGs is blank certain parts of an image, wiping them with all gray. For completeness it should be mentioned that you can also crop images losslessly with jpegtran.

In short, you can:

- optimize (declutter) the file structure, with jpegtran or jpegoptim
- crop the image and/or wipe less important areas with jpegtran

After that, you can compress files even further, but only by wrapping them in another file format, so an image viewer will not be able to instantly display such files (without extracting them from an archive).

PackJPG (now on GitHub, originated from Hochschule Aalen) (here's a GUI) is designed to compress JPEG files and can achieve 20% size reduction, but the resulting files are .PJG files, which no viewer I know of is able to display. WinZip seems to use an effective way of treating JPEGs, but I haven't tested it. The PAQ compressor and its companion ZPAQ archiver implement an algorithm designed for packing JPEGs.

7z offers "method 60: jpeg" as part of its pluggable compressor architecture, but I couldn't get it to work and so can't say anything about what it does, if it works, or if it's meant to compress JPEGs in the first place.

So that's what you can do (not much) to save a few bytes.

Coming from lossless?

For completeness, when you have access to the uncompressed (lossless) source image (like a RAW or PNG/TIFF/...), try to use the most recent libjpeg library for an efficient compression. Often mozjpeg is mentioned as a very capable alternative.

Don't use best quality (setting 12 in Photoshop or 100% in GIMP). For everyday use, select something between 70-85% (GIMP), and for high quality images something around 10 (PS) or 95-98% (GIMP). 99% and 100% (GIMP) won't buy you anything and file size may double.

Preserve file permissions, attributes and hardlinks. Instead of deleting the old file and writing a new one with the same name, this just replaces the old file's content in place. This ensures that all hardlinks, aliases, file ownership, permissions and extended file attributes will stay the same. It's slightly faster not to preserve permissions.

Strip PNG metadata (gamma, color profiles, optional chunks).
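Coming back to the lossless crop and wipe that jpegtran offers, here is a minimal sketch of how the two calls might look. The helper names `run`, `crop_lossless` and `wipe_region` and the `DRY_RUN` switch are made up for illustration. `-crop` and `-copy all` are standard jpegtran switches, while `-wipe` is only available in some jpegtran builds, so treat that part as an assumption about your installation.

```shell
#!/bin/sh
# Sketch: lossless crop and wipe with jpegtran.
# Set DRY_RUN=1 to print the commands instead of running them.

run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# crop_lossless SRC DST GEOMETRY
# GEOMETRY has the form WxH+X+Y, e.g. 640x480+16+32.
crop_lossless() {
    run jpegtran -copy all -crop "$3" -outfile "$2" "$1"
}

# wipe_region SRC DST GEOMETRY
# Grays out the given rectangle. Assumes a jpegtran build that
# understands -wipe; not every version does.
wipe_region() {
    run jpegtran -copy all -wipe "$3" -outfile "$2" "$1"
}
```

A call such as `crop_lossless photo.jpg cropped.jpg 640x480+16+32` writes the result to a new file and leaves the source alone. Note that jpegtran silently moves the upper left corner of the crop region to the nearest block boundary, so the output can be slightly larger than requested.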

`jpegoptim --all-progressive -v --strip-all --max=85 --preserve test.jpg`

This will work (-v: verbosely) on the file test.jpg, overwriting it with a progressive JPEG stripped of all EXIF etc. tags. In case it's a JPEG with quality > 85%, it will be re-compressed in a lossy way to 85% ("another generation").
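Since the command above overwrites the file and may add another lossy generation, it can be worth previewing the result first and writing the output somewhere else. The following is a minimal sketch: the helper names `run`, `preview_savings` and `optimize_copy` and the `DRY_RUN` switch are hypothetical, while `--noaction` and `--dest` are regular jpegoptim options.

```shell
#!/bin/sh
# Sketch: preview first, then write results to a separate directory.
# Set DRY_RUN=1 to print the commands instead of running them.

run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# preview_savings FILE...
# Reports what the optimization would achieve without touching any file.
preview_savings() {
    run jpegoptim --noaction --all-progressive --strip-all --max=85 "$@"
}

# optimize_copy DESTDIR FILE...
# Writes optimized copies into DESTDIR (which must already exist)
# and leaves the originals untouched.
optimize_copy() {
    dest=$1
    shift
    run jpegoptim --all-progressive --strip-all --max=85 --preserve --dest="$dest" "$@"
}
```

For example, `preview_savings test.jpg` followed by `optimize_copy optimized test.jpg` keeps test.jpg as it is. As far as I know, jpegoptim does not copy files it could not improve into the destination directory.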

This page here has a comparison of compression options for JPEGs. This stackoverflow question outlines a few.

Jpegtran, which is part of libjpeg, allows you to remove unnecessary comments and tags from images, is able to optimize Huffman tables, and can convert images to progressive. Progressive images are not streamable (meaning a viewer has to keep the whole image in memory for decoding), yet they are usually smaller.

Jpegoptim seems to be more of a batch processing tool, which provides most features of jpegtran. Further, it is able to apply lossy re-compression when files are compressed with a quality setting above a certain threshold. (I don't know if or how the tool determines the original quality setting used. Let's hope it does so by using forensics like documented here, here or elsewhere?)
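The three jpegtran features mentioned here (dropping comments and tags, optimizing the Huffman tables, converting to progressive) map to one command line. This is a minimal sketch, with `run`, `optimize_lossless` and `DRY_RUN` being names invented for the example; the jpegtran switches themselves are the standard ones.

```shell
#!/bin/sh
# Sketch: lossless JPEG clean-up with jpegtran.
# Set DRY_RUN=1 to print the command instead of running it.

run() {
    if [ "${DRY_RUN:-0}" = "1" ]; then
        printf '%s\n' "$*"
    else
        "$@"
    fi
}

# optimize_lossless SRC DST
#   -copy none    drop comments and EXIF/other marker segments
#   -optimize     recompute optimal Huffman tables
#   -progressive  write a progressive-scan file
optimize_lossless() {
    run jpegtran -copy none -optimize -progressive -outfile "$2" "$1"
}
```

Calling `optimize_lossless photo.jpg photo.opt.jpg` keeps the pixel data bit-identical, so it can be repeated without any generation loss. Use `-copy all` instead of `-copy none` if the EXIF data should survive.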

JPEG images on the other hand can't be optimized the same way. (There's no such tool named OptiJPEG, I made that up.) The Huffman coding compression scheme can't be optimized to a similar extent. And more effective coding schemes, like arithmetic coding (saving around 10%), became available a few years ago (due to an expired patent), yet decoder libraries too seldom support them to allow widespread use.

Even when you're sacrificing "immediate viewability" by using a file compressor/archiver, you'll soon find out that JPEGs don't compress well.
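The point that JPEGs barely shrink inside a general-purpose archive is easy to check yourself. Below is a small sketch that prints a file's size next to its `gzip -9` size; `compare_gzip` is a hypothetical helper name and gzip merely stands in for any generic compressor.

```shell
#!/bin/sh
# Sketch: compare a file's size with its size after gzip -9.

# compare_gzip FILE
# Prints "<original bytes> <gzipped bytes>" on one line.
compare_gzip() {
    # wc may pad its output with blanks, so strip all whitespace
    orig=$(wc -c < "$1" | tr -d '[:space:]')
    packed=$(gzip -9 -c "$1" | wc -c | tr -d '[:space:]')
    echo "$orig $packed"
}
```

Run it as `compare_gzip photo.jpg`. For an already entropy-coded file such as a JPEG, the two numbers tend to be close to each other, which is why the dedicated JPEG packers exist.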
